I've spent several weeks working through a wide variety of Oracle database integration use cases with Entra ID for the express purpose of methodically deriving all possible error codes that that a customer might encounter during an evaluation. I will list each error followed by the one or more potential causes.

Before getting started with the errors, know that you can enable client and server side tracing to reveal underlying root causes of errors.

Client-side Trace for SQL*Plus

Either add the following to sqlnet.ora or set as environment variables the following before attempting to connect to the database server:

Note that 15 is for high level tracing and 14 for low level tracing.

Database Server Side Trace for non-autonomous databases

Set the following in the CDB:

SQL> ALTER SYSTEM SET EVENTS 'TRACE[TOKEN] DISK=HIGHEST';

Database Server Side Trace for autonomous databases

Review the alert log. For example:

SQL> SELECT FROM V$DIAG_ALERT_EXT ORDER BY originating_timestamp desc;

SQL> SELECT * FROM UNIFIED audit_trail ORDER BY event_timestamp desc fetch first 100 rows only;

SQL> CREATE AUDIT POLICY ORA LOGON_SUCCESSES ACTIONS LOGON;

SQL> AUDIT POLICY ORA_LOGON_SUCCESSES WHENEVER SUCCESSFUL;

Error Number Documentation

Note that most errors provide additional help at the documentation page for the respective error code.

For example : https://docs.oracle.com/error-help/db/ora-<number>

Database Name Resolution

Database name resolution is typically provided by the explicit connect string, exclusively tnsnames.ora for JDBC-thin driver clients, a combination of sqlnet.ora and tnsnames.ora for JDBC-thick and OCI (C-based) client drivers, or LDAP-based name services. Information on LDAP-based database name services are available at the following blog post:

https://www.braddiggs.com/2023/10/oud-12cps4-use-case-oracle-database.html

Best Practice: Regardless of the name resolution repository, the best practice is to include all elements of connection in either the explicit connect string or TNS entry.

Here is a human friendly formatted TNS entry for database PDB1. The blue font items are required for the encrypted TCPS TLS connection. TLS connections between client and server are required for Entra ID integration. The red font items are unique to Entra ID connections.

PDB1 =

(DESCRIPTION=

(ADDRESS=(PROTOCOL=TCPS)(HOST=hrdb.dbauthdemo.com)(PORT=2484))

(SECURITY=

(SSL_SERVER_DN_MATCH=TRUE)

(WALLET_LOCATION=SYSTEM)

(TOKEN_AUTH=AZURE_INTERACTIVE)

(TENANT_ID=7f4c6e3e-a1e0-43fe-14c5-c2f051a0a3a1)

(CLIENT_ID=e5124a85-ac3e-14a4-f2ca-1ad635cf781a)

(AZURE_DB_APP_ID_URI=https://dbauthdemo.com/16736175-ca41-8f33-af0d-4616ade17621)

)

(CONNECT_DATA=

(SERVER=DEDICATED)

(SERVICE_NAME=pdb1)

)

)

Here is the equivalent explicit connect string being called with the connect SQL command:

SQL> connect (DESCRIPTION=(ADDRESS=(PROTOCOL=TCPS)(HOST=hrdb.dbauthdemo.com)(PORT=2484))(SECURITY=(SSL_SERVER_DN_MATCH=TRUE)(WALLET_LOCATION=SYSTEM)(TOKEN_AUTH=AZURE_INTERACTIVE)(TENANT_ID=7f4c6e3e-a1e0-43fe-14c5-c2f051a0a3a1)(CLIENT_ID=e5124a85-ac3e-14a4-f2ca-1ad635cf781a)(AZURE_DB_APP_ID_URI=https://dbauthdemo.com/16736175-ca41-8f33-af0d-4616ade17621))(CONNECT_DATA=(SERVER=DEDICATED)(SERVICE_NAME=pdb1)))

Error Messages:

Here are the list of errors that I've encountered while troubleshooting Entra ID integration issues.

Error: ORA-01017: invalid username/password; logon denied

Potential reasons for this error:

- Invalid username or password provided

- The server side configuration of the target database of the client connection (CDB or PDB) may not yet be configured for Entra Integration including setting IDENTITY_PROVIDER_TYPE to AZURE_ID and applying Entra ID integration to IDENTITY_PROVIDER_CONFIG

- The connection string or TNS entry of the client may not include all requisites for Entra ID integrated database

- Use the following to determine if IDENTITY_PROVIDER_TYPE is set to AZURE_ID:

SQL> SELECT NAME, VALUE FROM V$PARAMETER WHERE NAME='identity_provider_type';

- Use the following to determine if IDENTITY_PROVIDER_CONFIG has the correct values for tenant_id, application_id_uri (e.g. database app URI), and app_id (e.g. database app registration's application ID).

SQL> SELECT NAME, VALUE FROM V$PARAMETER WHERE NAME='identity_provider_config';

Error: ORA-12154: Cannot connect to database. Cannot find alias <db> in

<dir>\tnsnames.ora.

Potential reasons for this error:

- The tnsnames.ora file does not exist in <odb_client_base>\network\admin or that the specified database does. Note that you can specify TNS_ADMIN=\<path> to specify the folder path to the tnsnames.ora file

- The requested database does not exist in the tnsnames.ora file.

Error: ORA-12170: Cannot connect. - timeout of -s for host <ip_address> port <secure_port>.

(CONNECTION_ID=<connection_id>)

Potential reasons for this error:

- The SQL*Plus command cannot connect to the host specified in the TNS or connect string entry

- Check network firewall or host-based firewall ingress rules allow the host of the database client to connect to the host of the database server

- Check the network firewall or host-based firewall egress rules do not allow the database client or server to connect to the database server's host

ORA-12541: Cannot connect. No listener at host <ip> port <port>Potential reasons for this error:

- Oracle database listener is not running or ingress firewall rules (network or host-based) are blocking access to the database port.

Error: ORA-12545: Connect failed because target host or object does not exist

Potential reasons for this error:

- Can’t lookup specified connect string. Check name service resolution in tnsnames.ora or directory service

- Make sure TNS_ADMIN is set to the directory where tnsnames.ora exists

- You get the following Microsoft Entra ID error when you specify an invalid client ID.

Error: ORA-12547: TNS:lost contact

Potential reasons for this error:

- Occurs if you specify PROTOCOL of TCP instead of TCPS in connect string or TNS entry

Error: ORA-12514: Cannot connect to database. Service <service_name> is not registered with the

listener at host <hostname_or_ip_address> port <tcps_tls_port>

(CONNECTION_ID=Kittn+/2RPGXnZPxZAV9Hw==)

Potential reasons for this error:

- Occurs when incorrect SERVICE_NAME value is specified in connect string or TNS entry

Error: ORA-18726: Failed to get a value from an OracleResourceProvider: No implementation of oracle.jdbc.spi.AccessTokenProvider with name: "ojdbc-provider-azure-token" can be located. Implementations of oracle.jdbc.spi.AccessTokenProvider that can be located have the following names : []

Potential reasons for this error:

- In the case of SQLDeveloper, this can occur if the SQLDeveloper product.conf configuration file does not have all of the requisite OJDBC extension libraries required for Entra ID integration. See this blog post (https://www.braddiggs.com/2025/04/entra-id-integration-for-sqldeveloper.html) for details on how to configure SQLDeveloper for EntraID integration

- This can result from using a version of the SQL*Plus client earlier than 23.7.

- Note that if the connect string or TNS entry specifies TOKEN_AUTH=AZURE_DEVICE_CODE , this may indicate that AZURE_DEVICE_CODE is not yet supported

Here is the equivalent Entra ID error when logging in with SQLcl 25.1:

To sign in, use a web browser to open the page https://microsoft.com/devicelogin and enter the code ARSX62NBJ to authenticate.

2025-04-16 09:20:46.198 SEVERE com.azure.core.util.logging.ClientLogger performDeferredLogging Azure Identity => ERROR in getToken() call for scopes [https://dbauthdemo.com/89746215-ea34-4a83-ac0e-46a68de97935/.default]: Failed to acquire token with device code.

Connection failed

USER =

URL = jdbc:oracle:thin:@(DESCRIPTION=(ADDRESS=(PROTOCOL=TCPS)(HOST=hrdb.dbauthdemo.com)(PORT=2484))(SECURITY=(SSL_SERVER_DN_MATCH=TRUE)(WALLET_LOCATION=SYSTEM)(TOKEN_AUTH=AZURE_INTERACTIVE)(TENANT_ID=7f4c6e3e-a1e0-43fe-14c5-c2f051a0a3a1)(CLIENT_ID=e5124a85-ac3e-14a4-f2ca-1ad635cf781a)(AZURE_DB_APP_ID_URI=https://dbauthdemo.com/16736175-ca41-8f33-af0d-4616ade17621))(CONNECT_DATA=(SERVER=DEDICATED)(SERVICE_NAME=pdb1)))

Error: ORA-18951: NL exception was generated: TNS-04610: No literals left, reached end of NV pair

Potential reasons for this error:

- Occurs when attempting to connect via thin client with TOKEN_AUTH=AZURE_DEVICE_CODE and the previous authentication was successful but failed to get token (Error: ORA-18726)

Error: ORA-25704: Token-based authentication requires client parameter SSL_SERVER_DN_MATCH=TRUE.

Potential reasons for this error:

- All Entra ID integration connections between Oracle database client require encrypted TLS connection with SSL_SERVER_DN_MATCH=TRUE

Error: ORA-25707: The token is invalid

Potential reasons for this error:

- If using file-based access token acquisition and refreshing, the token is not present, invalid or expired

- If using memory-based access token acquisition and refreshing, the token may not have been passed because of an issue with TLS configuration setup between the database client and database server.

- Use client and server side tracing to ascertain root cause.

Error: ORA-25714: invalid value of PASSWORD_AUTH or TOKEN_AUTH in the client configuration

Potential reasons for this error:

- OCI driver (e.g. sqlplus) or JDBC-thick driver version used is not supported. SQL*Plus 19.18 or newer is required

- JDBC-thin driver appears to only work with 23.7 or newer

Error: ORA-25718: Invalid value of CLIENT_ID in the client configuration

Potential reasons for this error:

- Occurs when CLIENT_ID is invalid format or bad value in connect string or TNS entry

You may also see Entra ID Error message AADSTS90016:

Error: ORA-25719: Invalid value of TENANT_ID in the client configuration

Potential reasons for this error:

- Occurs if the TENANT_ID is incomplete or invalid format in the connect string or TNS entry

Error: ORA-25726: Invalid value of AZURE_CREDENTIALS in the client configuration

Potential reasons for this error:

- Occurs when no client credentials have been entered for TOKEN_AUTH=AZURE_SERVICE_PRINCIPAL

Error: ORA-25724: Azure AD authentication timeout and Vendor Code: 25724

Potential reasons for this error:

- Occurs when pop-up browser connection to https://login.microsoftonline.com times out after 60 seconds

- Test connection multiple times from your computer to Microsoft login with:

- Linux: curl -ksL https://login.microsoftonline.com/

- Windows Powershell: Invoke-WebRequest -Uri https://login.microsoftonline.com

- Note that web connection test may require going through a web proxy if direct outbound connections are not permitted

- If connection is successful some of the time, there may be a routing or firewall issue between your computer and Microsoft's login page

- If connection fails every time, there is likely either a firewall constraint, routing issue or Microsoft login may be temporarily down

- In Microsoft Terminal Services environments like Microsoft RDP or Citrix, the ODB client redirect to localhost (127.0.0.1) may not be connecting to the user's session real localhost (127.0.0.2-n). The respective terminal services solution will need to be configured to connect the two for the requesting client application. In this case determine what port that the web browser used to connect to localhost after successful EntraID authentication (typically localhost:8400 but can be other number) and then use (netstat -ano | findstr "<port>") to find the process that is trying to connect to that port. Note that both the web browser (Edge or Internet Explorer by default in Windows Server depending on version) and the identified process will need to be added to the Windows or Citrix policy for connecting the virtual localhost (127.0.0.1) and real user session localhost (127.0.0.n). Here is a screenshot from the Citrix policy:

Error: ORA-28547: connection to server failed, probable Oracle Net admin error

Potential reasons for this error:

- Combination ORA and Entra ID Error message AADSTS90002:

or Entra ID Error message AADSTS700016:

- This error occurs if you have not specified the correct TENANT_ID in the connect string or TNS entry

- Entra ID authentication attempt either timed out or failed to authenticate properly

- Occurs when incorrect CLIENT_ID value is specified in connect string or TNS entry

Error: ORA-28759: failure to open file

Potential reasons for this error:

- WALLET_LOCATION of the connect string or TNS entry has specified a wallet path for which no wallet exists

Error: ORA-28864: SSL connection closed gracefully

Potential reasons for this error:

- If you’ve specified the non-secure port number (Default: 1521) instead of the secure port number (Default: 2484) in the connect string or TNS entry

- Note that the default autonomous database uses port 1521 for secure port

Error: ORA-28865: SSL connection closed

Potential reasons for this error:

- The database server may have not yet set the wallet_root directory

- Check to make sure that the wallet has been copied to the PDB GUID directory ($WALLET_ROOT/$pdbguid/tls) on the database server

Set the database server wallet with:

sqlplus / as sysdba

SQL> alter system set wallet_root='/u01/app/oracle/19c/wallet_root' scope=spfile;

SQL> shutdown immediate;

SQL> quit;

lsnrctl stop

lsnrctl stop

sqlplus / as sysdba

SQL> startup;

SQL> alter pluggable database all open;

SQL> alter system register;

SQL> quit;

Error: ORA-29003: SSL transport detected mismatched server certificate.

Potential reasons for this error:

- Occurs the SSL_SERVER_CERT_DN value of the connect string or TNS entry does not match the certificate subject or subject alternative name (SAN) entries of the certificate

- You can use the openssl command of a Linux host to ascertain the certificate and SAN entries of the database server with:

$ echo | openssl s_client -connect hrdb.dbauthdemo.com:2484 2>&1 | openssl x509 -noout -text | egrep -i "DNS|subject"

Subject: CN=hrdb.dbauthdemo.com

Subject Public Key Info:

X509v3 Subject Alternative Name:

DNS:hrdb.dbauthdemo.com, DNS:www.hrdb.dbauthdemo.com

X509v3 Subject Key Identifier:

Error: ORA-29024: Certificate validation failure

Potential reasons for this error:

- This error can be caused by the signing certificate authority not being present in the client host certificate authority trust store

- Use orapki on the database host to display the trusted certificate subject and confirm that this trusted certificate is in the client host

- orapki wallet display -complete -wallet $WALLET_ROOT/tls -pwd <wallet_password>

- In Windows client, run mmc, add “Certificates” snap-in, look up “Trusted Root Certification Authorities” to see if the DN o the certificate authority matches the subject of the same certificate authority from orapki display

- In Linux, return all of the CA certificate subjects with: openssl crl2pkcs7 -nocrl -certfile /etc/pki/tls/certs/ca-bundle.trust.crt | openssl pkcs7 -print_certs -noout

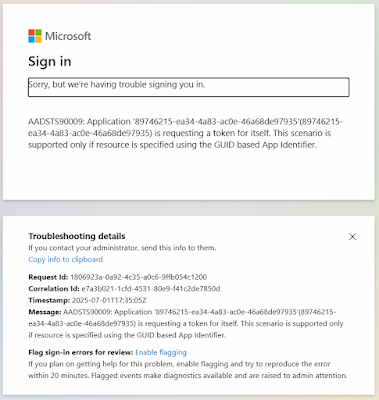

Error: AADSTS90009: Application '...'(...) is requesting a token for itself. This scenario is supported only if resource is specified using the GUID based App Identifier.

Potential reasons for this error:

- This error is caused by specifying the database server's web Application (client) ID for the value of the CLIENT_ID of the connect string or TNS entry. Here is a sample screenshot of this error.